Now I would like to inpaint the regions above; let's start with radius 3: `inpainted = cv2.inpaint(input, mnanssmall, 3, cv2.INPAINT_NS)`. These are the inpainted regions. Then I tried increasing the radius to 10, which resulted in nothing being inpainted. Then I tried dilating the mask, which led to NaNs being inpainted.

This model card was written by Robin Rombach and Patrick Esser and is based on the DALL-E Mini model card. Resources for more information: GitHub Repository, Paper.

Stable Diffusion is capable of generating more than just still images. Alternatively, install the Deforum extension to generate animations from scratch.

Model Description: This is a model that can be used to generate and modify images based on text prompts. It is a Latent Diffusion Model that uses a fixed, pretrained text encoder (CLIP ViT-L/14), as suggested in the Imagen paper. Model type: Diffusion-based text-to-image generation model. Developed by: Robin Rombach, Patrick Esser. License: The CreativeML OpenRAIL M license is an Open RAIL M license, adapted from the work that BigScience and the RAIL Initiative are jointly carrying out in the area of responsible AI licensing. See also the article about the BLOOM Open RAIL license on which our license is based. Download the weights: sd-v1-5-inpainting.ckpt.

To make an animation using Stable Diffusion web UI, use Inpaint to mask what you want to move, generate variations, and then import them into a GIF or video maker.

In this example we will be using this image. Download it and place it in your input folder. This image has had part of it erased to alpha with GIMP; the alpha channel is what we will be using as a mask for the inpainting. If using GIMP, make sure you save the color values of the transparent pixels for best results. Example prompt: "Face of a yellow cat, high resolution, sitting on a park bench".

To be clear, if I generate dreams inside the 512x512 area it does work and comes up with alternative inpaints; it's just on the second dream that I can't get anything outside that original 512x512 space. I've checked that I'm using the right inpainting model, VAE and sampler (DDIM), and I've locked and unlocked the seed, etc. Adding another overlapping "dream" like in the demo just generates black.

It's called 'Image Refiner'; you should look into it. EDIT: There is something like this already built into WAS. Green squares show what I'm looking to create for the image and where I want the elements to be created. Please repost it to the OG question instead.
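Using the GIMP-erased alpha channel as a mask comes down to thresholding alpha. Below is a minimal NumPy sketch, assuming an H x W x 4 RGBA array (for example from Pillow via `np.array(Image.open(path).convert("RGBA"))`) and the common convention that white (255) means "inpaint here"; `split_alpha_mask` is a hypothetical helper, not part of any UI.

```python
import numpy as np


def split_alpha_mask(rgba: np.ndarray, threshold: int = 0):
    """Split an RGBA uint8 image into RGB pixels and an inpainting mask.

    Hypothetical helper. Pixels whose alpha is <= threshold (erased in GIMP)
    become 255 in the mask ("inpaint here"); all others become 0 ("keep").
    """
    if rgba.ndim != 3 or rgba.shape[2] != 4:
        raise ValueError("expected an H x W x 4 RGBA array")
    # The colour under transparent pixels survives only if GIMP saved it.
    rgb = rgba[..., :3].copy()
    alpha = rgba[..., 3]
    mask = np.where(alpha <= threshold, 255, 0).astype(np.uint8)
    return rgb, mask
```

Raising `threshold` also treats partly transparent pixels (soft brush edges) as masked.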
When you have the needed files downloaded and have generated an image with Stable Diffusion, click the ‘Send to inpaint’ button below the generated image(s) to start the inpaint process.

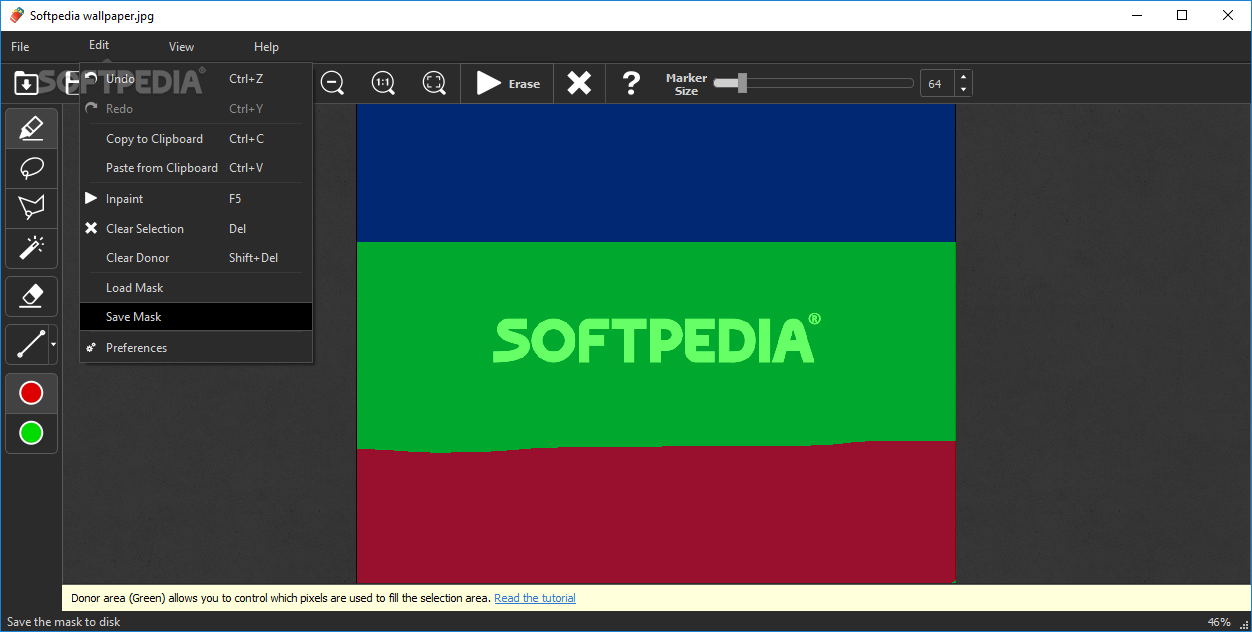

Mask merge options: None inpaints each mask separately; Merge merges all masks and then inpaints; Merge and Invert merges all masks, inverts the result, and then inpaints. The steps are applied in this order: x/y offset, then erosion/dilation, then merge/invert.

Install (from Mikubill/sd-webui-controlnet): open the 'Extensions' tab.

I am very well aware of how to inpaint/outpaint in ComfyUI; I use Krita. You must be mistaken; I will reiterate, I am not the OG of this question.

Got it running and installed, and it generates/"dreams" the first 512x512, but even after accepting the generated image everything is limited to that original 512x512 render space. I'm struggling with what's clearly PEBKAC, but any tips would be appreciated. If I don't allow the two to touch, I can "dream" over and over on an empty canvas, but if there's any overlap at all it generates just a black image. EDIT/UPDATE: It only generates black when overlapping with another existing render.

Thanks to everyone for trying it out, and my eternal appreciation to openOutpaint's collaborators :D There's a moderately outdated (recently updated) full usage manual and a step-by-step guide that kinda sucks at (and respectively). If you're using it in a Colab (particularly TheLastBen's?) and are experiencing 500 errors, 404 index.html not found, etc., there's a fix you can apply manually for the time being; there's an issue opened on TheLastBen's repo for it as well, but see here for details. You'll need to set your COMMANDLINE_ARGS value to include --api if using the extension, and set appropriate values for --cors-allow-origins if using it standalone, but I can pretty heartily recommend just using the extension in most cases :)

So yeah, to preface, it's vital to use an inpainting-aware model; they're specifically trained with additional UNet channels and an understanding of masking. Beyond that, outpainting is handled through the dream (txt2img) tool; the img2img tool can definitely inpaint but will drop flat black on transparent pixels and is unusable for outpainting.
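The mask merge options and their order of application can be sketched with NumPy. The helper names, the offset sign convention (positive x moves right, positive y moves down) and the square structuring element are assumptions made for illustration; the tool that actually exposes these options may differ in detail.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def offset(mask: np.ndarray, dx: int, dy: int) -> np.ndarray:
    """Shift a boolean mask by (dx, dy); uncovered pixels become False (assumed convention)."""
    out = np.zeros_like(mask)
    h, w = mask.shape
    out[max(dy, 0):h + min(dy, 0), max(dx, 0):w + min(dx, 0)] = \
        mask[max(-dy, 0):h + min(-dy, 0), max(-dx, 0):w + min(-dx, 0)]
    return out


def erode_dilate(mask: np.ndarray, pixels: int) -> np.ndarray:
    """Dilate (pixels > 0) or erode (pixels < 0) with a (2|pixels|+1) square window."""
    if pixels == 0:
        return mask.copy()
    r = abs(pixels)
    size = 2 * r + 1
    padded = np.pad(mask, r, mode="constant", constant_values=False)
    windows = sliding_window_view(padded, (size, size))  # shape (H, W, size, size)
    return windows.any(axis=(-2, -1)) if pixels > 0 else windows.all(axis=(-2, -1))


def prepare_masks(masks, dx=0, dy=0, pixels=0, mode="None"):
    """Apply offset, then erosion/dilation, then merge/invert (hypothetical helper)."""
    processed = [erode_dilate(offset(m, dx, dy), pixels) for m in masks]
    if mode == "None":
        return processed  # each mask is inpainted on its own
    merged = np.logical_or.reduce(processed)  # union of all masks
    if mode == "Merge":
        return [merged]
    if mode == "Merge and Invert":
        return [~merged]
    raise ValueError(f"unknown mode: {mode}")
```

Because the offset runs first, dilation grows the mask around its shifted position rather than its original one.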

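openOutpaint prepares the canvas for you, but the general idea behind outpainting with an inpainting-aware model can be sketched by hand: enlarge the canvas, fill the new area with something other than flat black, and mark it in the mask. This NumPy sketch assumes an H x W x C uint8 image, a white-means-inpaint mask, and edge replication as the fill; `prepare_outpaint` and its `overlap` parameter are hypothetical.

```python
import numpy as np


def prepare_outpaint(image, left=0, right=0, top=0, bottom=0, overlap=0):
    """Grow the canvas of an H x W x C uint8 image and build a matching mask.

    Hypothetical helper. New pixels are pre-filled by repeating the nearest
    edge pixels (instead of flat black) and marked 255 in the mask; `overlap`
    extends the mask this many pixels into the original image on each extended
    side so the seam is regenerated too.
    """
    pad = ((top, bottom), (left, right), (0, 0))
    canvas = np.pad(image, pad, mode="edge")
    h, w = image.shape[:2]
    mask = np.full(canvas.shape[:2], 255, dtype=np.uint8)
    # Keep (0) the original area, shrunk by `overlap` on every side that was extended.
    y0 = top + (overlap if top else 0)
    y1 = top + h - (overlap if bottom else 0)
    x0 = left + (overlap if left else 0)
    x1 = left + w - (overlap if right else 0)
    mask[y0:y1, x0:x1] = 0
    return canvas, mask
```

Edge replication is only one choice of fill; the point is that the model receives plausible colour under the mask rather than the flat black that img2img produces on transparent pixels.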